I’ve noticed that what often keeps someone from forecasting, estimating, or developing a model is a gap in knowledge of (or access to) a framework or terminology, rather than a lack of the analytical or quantitative skills required.

What is forecasting?

Forecasting is attempting to predict the future.

We can end here, seriously!

Forecasting is the art of using mathematical tools and the information we have to try to express a possible future.

From probability theory, we know that every event has a likelihood of happening. Anything you can think of either happens or doesn’t, with a whole lot more than fifty shades of likelihood in between.

$$0 \le P(a) \le 1$$

When you get in your car in the morning and plot the route to your office, Google Maps gives you an estimated time of arrival (ETA) based on distance and traffic information.

However, you know there’s always a chance that you won’t make the predicted ETA. What if you get a flat tire? What if there’s an accident along the route?
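To make “there’s always a chance” concrete, here is a minimal Monte Carlo sketch in Python. Every number in it is a made-up assumption for illustration: a typical 25-minute drive with a few minutes of everyday variation, a 2% chance of a flat tire costing 30 minutes, and a 5% chance of an accident costing 15. Simulating many commutes and counting how often we arrive within a given ETA gives an estimated probability, which always lands between 0 and 1.

```python
import random


def simulate_commute(rng):
    """Minutes for one hypothetical commute (all numbers are assumptions)."""
    minutes = rng.gauss(25, 3)   # typical drive plus everyday traffic variation
    if rng.random() < 0.02:      # assumed 2% chance of a flat tire
        minutes += 30
    if rng.random() < 0.05:      # assumed 5% chance of an accident on the route
        minutes += 15
    return max(minutes, 0.0)


def probability_on_time(eta_minutes, trials=50_000, seed=1):
    """Share of simulated commutes that arrive within eta_minutes."""
    rng = random.Random(seed)
    on_time = sum(simulate_commute(rng) <= eta_minutes for _ in range(trials))
    return on_time / trials


for eta in (25, 30, 35):
    print(f"ETA {eta} min: P(on time) ~ {probability_on_time(eta):.2f}")
```

Allowing a more generous ETA raises the estimated probability of being on time. The useful output here is a probability, not a single number.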

We forecast every day in our personal lives: when we tell our friends we’ll arrive in 10 minutes, or when we set a morning alarm so that we make it to an appointment on time.

Based on how fast we drive or walk and our knowledge (or lack thereof) of how long it takes us to get ready, we have an idea of how long it will take us to get somewhere.

And still, we know that unforeseen events sometimes ruin our carefully crafted plans. We all know someone who has a terrible sense of time and is extremely bad at giving estimates.

Every assumption behind an estimate carries some uncertainty, and so does every event that might happen along the way.

In our complex, chaotic world, it’s often impossible to quantify all the uncertainties and variables that might affect the outcome.

However, it’s often a great exercise to imagine an ideal world where we can abstract away much of this complexity. In that world, if we drop a very round stone from a bridge of known height, we can use a formula from physics class to calculate the time it takes the stone to reach the bottom:

$$t = \sqrt{\frac{2h}{g}}, \qquad g = 9.82\ \mathrm{m/s^2}$$

where $t$ is the fall time and $h$ is the height of the bridge.
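As a quick check, the idealized formula translates directly into code. This is a minimal Python sketch using the same g = 9.82 m/s² and a hypothetical 20 m bridge:

```python
import math


def fall_time(height_m: float, g: float = 9.82) -> float:
    """Seconds for a stone dropped from rest to fall height_m metres,
    under the idealized assumptions (no wind, no air resistance)."""
    return math.sqrt(2 * height_m / g)


print(round(fall_time(20), 2))  # 2.02
```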

But if you had a great physics teacher, you know that every formula comes with caveats and good discussions. Some “formulas” only apply when several assumptions hold. When we drop a stone and use the formula above, we assume, among other things, that:

- there is no wind that day

- we drop the stone without giving it any initial speed

- the stone’s surface area is small enough that air resistance is negligible

- the value of g is accurate for the place where we drop the stone

Still, by using this formula, and the mathematical model and assumptions behind it, we can get a good estimate of how long the stone takes to reach the ground. It would almost certainly be more accurate than a number guessed at random.
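To see how much one of these assumptions matters, here is a hedged Python sketch comparing the idealized fall time with a model that adds quadratic air drag to a sphere dropped from rest. The stone’s size, its density (roughly that of granite), the air density, and the sphere drag coefficient of 0.47 are all illustrative assumptions. For a quadratic-drag fall from rest, the distance fallen is (v_t²/g)·ln(cosh(g·t/v_t)), where v_t is the terminal velocity, and that expression can be solved for t in closed form.

```python
import math

G = 9.82            # m/s^2, the same value used in the idealized formula
AIR_DENSITY = 1.2   # kg/m^3, assumed: air near sea level
DRAG_COEFF = 0.47   # assumed: typical drag coefficient quoted for a smooth sphere


def ideal_fall_time(height_m):
    """No-air-resistance model: t = sqrt(2h / g)."""
    return math.sqrt(2 * height_m / G)


def fall_time_with_drag(height_m, radius_m=0.03, density=2600.0):
    """Fall time for a sphere dropped from rest with quadratic air drag.

    radius_m and density (kg/m^3; 2600 is roughly granite) are illustrative.
    """
    mass = density * (4 / 3) * math.pi * radius_m ** 3
    area = math.pi * radius_m ** 2
    k = 0.5 * AIR_DENSITY * DRAG_COEFF * area  # drag force = k * v^2
    v_terminal = math.sqrt(mass * G / k)
    # Distance fallen: h = (v_t^2 / g) * ln(cosh(g * t / v_t)); solved for t
    return (v_terminal / G) * math.acosh(math.exp(G * height_m / v_terminal ** 2))


height = 20.0  # hypothetical bridge height in metres
print(f"ideal model:        {ideal_fall_time(height):.3f} s")
print(f"stone, with drag:   {fall_time_with_drag(height):.3f} s")
print(f"foam ball, w/ drag: {fall_time_with_drag(height, density=50.0):.3f} s")
```

With these assumed numbers, a 6 cm stone falling 20 m takes only about 1% longer than the ideal formula predicts, so the simple model is a good estimate. A foam ball of the same size (density around 50 kg/m³) takes closer to 3 s instead of about 2 s, which is exactly where the “negligible air resistance” assumption stops holding.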

Forecasting works on the same principle. We try to understand the system and describe it with a mathematical model. Every model has some level of accuracy and precision and comes with several caveats. Picking a model usually means balancing tradeoffs: simplicity, usability, and complexity, and the ability to extrapolate or reuse the model versus the risk of overfitting it to the data you happen to have.
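Here is a small, self-contained Python sketch of the overfitting tradeoff: two models fitted to the same ten hypothetical points, a least-squares straight line and a polynomial that passes exactly through every point (a Lagrange interpolating polynomial). The data are a straight trend, y = 2x + 5, plus a fixed alternating ±0.5 wiggle standing in for noise; both choices are illustrative assumptions.

```python
# Hypothetical training data: trend y = 2x + 5 plus an alternating +/-0.5 wiggle
xs = list(range(10))
ys = [2 * x + 5 + (0.5 if x % 2 == 0 else -0.5) for x in xs]


def fit_line(xs, ys):
    """Ordinary least-squares straight line; returns a predict(x) function."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sum(
        (x - x_mean) ** 2 for x in xs
    )
    intercept = y_mean - slope * x_mean
    return lambda x: intercept + slope * x


def fit_interpolant(xs, ys):
    """Lagrange polynomial through every training point (degree n - 1)."""
    def predict(x):
        total = 0.0
        for j, (xj, yj) in enumerate(zip(xs, ys)):
            basis = 1.0
            for m, xm in enumerate(xs):
                if m != j:
                    basis *= (x - xm) / (xj - xm)
            total += yj * basis
        return total
    return predict


def mean_abs_error(model, xs, ys):
    return sum(abs(model(x) - y) for x, y in zip(xs, ys)) / len(xs)


line = fit_line(xs, ys)
curve = fit_interpolant(xs, ys)

print("training error, line:       ", mean_abs_error(line, xs, ys))
print("training error, interpolant:", mean_abs_error(curve, xs, ys))
# Extrapolate past the data; the underlying trend gives 2 * 12 + 5 = 29
print("forecast at x=12, line:       ", line(12))
print("forecast at x=12, interpolant:", curve(12))
```

The interpolating polynomial wins on the data it has already seen (zero training error), but its forecast at x = 12 is off by more than twenty thousand, while the simpler line lands close to the underlying trend value of 29.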

Accuracy and Precision

Good discussions, critical thought, and a willingness to learn from and explain observations are foundational skills for building good models and for forecasting in general.

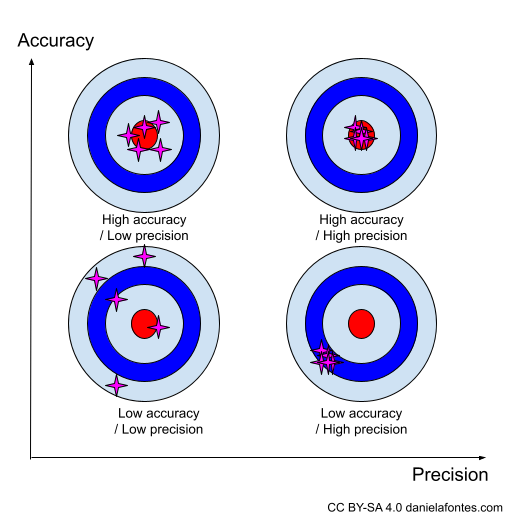

One of the most important ways to evaluate a model is to understand its accuracy and precision.

These two terms are often used interchangeably in everyday speech, but in a scientific context they are not synonyms.

Accuracy describes how close a measurement or prediction is to the true value.

Precision describes how close repeated measurements of the same item or input are to each other.

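The best-known way to picture this is a dartboard: darts clustered tightly away from the bullseye are precise but not accurate, while darts scattered evenly around the bullseye are accurate but not precise (you can read more about accuracy vs. precision here: https://www.antarcticglaciers.org/glacial-geology/dating-glacial-sediments-2/precision-and-accuracy-glacial-geology/).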
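The dartboard picture can be turned into two numbers. In this minimal Python sketch (one reasonable way to quantify it, not a standard definition), accuracy is measured as the distance between the average landing point and the bullseye, and precision as the spread of the throws around their own average. The throws are hypothetical.

```python
import math


def accuracy_and_precision(throws, target=(0.0, 0.0)):
    """Return (bias, spread) for a list of (x, y) dart positions.

    bias   : distance from the average landing point to the target
             (lower means more accurate)
    spread : root-mean-square distance of the throws from their own average
             (lower means more precise)
    """
    n = len(throws)
    cx = sum(x for x, _ in throws) / n
    cy = sum(y for _, y in throws) / n
    bias = math.hypot(cx - target[0], cy - target[1])
    spread = math.sqrt(sum((x - cx) ** 2 + (y - cy) ** 2 for x, y in throws) / n)
    return bias, spread


# Hypothetical throws, in cm from the bullseye
tight_but_off = [(4.0, 4.1), (4.2, 3.9), (3.9, 4.0), (4.1, 4.0)]
scattered_around_center = [(3.0, -2.0), (-2.5, 3.0), (-3.0, -2.5), (2.5, 1.5)]

for name, throws in [("tight but off", tight_but_off),
                     ("scattered around center", scattered_around_center)]:
    bias, spread = accuracy_and_precision(throws)
    print(f"{name}: bias={bias:.2f} cm, spread={spread:.2f} cm")
```

The first thrower is precise but not accurate (small spread, large bias); the second is accurate but not precise.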

A model with high precision but low accuracy often points to what we call a “systematic error”: somewhere along the way, your tools or your process introduce a consistent bias, so the results cluster tightly around the wrong value.
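One common way to detect and correct a simple systematic error is to calibrate against something whose true value you already know. This Python sketch assumes a hypothetical kitchen scale whose readings of a 1.000 kg reference weight are tightly clustered (high precision) but consistently about 0.3 kg too high (low accuracy). It also assumes the error is a constant offset; real instruments don’t always behave that way, since errors can also scale with the measured value.

```python
import statistics

# Hypothetical: five readings of a reference weight known to be exactly 1.000 kg
REFERENCE_KG = 1.000
readings = [1.302, 1.298, 1.301, 1.299, 1.300]

bias = statistics.mean(readings) - REFERENCE_KG  # systematic error (accuracy)
spread = statistics.stdev(readings)              # random error (precision)


def corrected(reading_kg):
    """Remove the estimated constant offset from a new reading."""
    return reading_kg - bias


print(f"bias: {bias:+.3f} kg, spread: {spread:.4f} kg")
print(f"a reading of 2.300 kg corrects to {corrected(2.300):.3f} kg")
```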

How to forecast?

You can think of the system as a complex landscape you want to capture in a painting. Like a landscape painter, you’ll always be limited by your tools: paintbrushes, watercolors, paper or canvas.

You’ll be building a representation with the tools you have available.

When building a forecast, these tools are often the mathematical formulas you know (the watercolors), the data (the landscape you see with your own eyes), and software that can be as simple as a spreadsheet like Excel or as sophisticated as R, Python, or MATLAB (the brushes and paper). Using AI, or iterating to refine a given model, can be understood as your practice as a landscape painter.

Here are some resources to help you improve your skills:

- How to Choose the Right Forecasting Technique, by John C. Chambers, Satinder K. Mullick, and Donald D. Smith (Harvard Business Review, 1971; https://hbr.org/1971/07/how-to-choose-the-right-forecasting-technique). Subtitled “What every manager ought to know about the different kinds of forecasting and the times when they should be used,” this in-depth article gives a great systematic overview of forecasting techniques and how to pick the right one.

- Ahead of the Curve: A Commonsense Guide to Forecasting Business and Market Cycles, by Joseph H. Ellis (https://www.amazon.com/Ahead-Curve-Commonsense-Forecasting-Business/dp/1591396913). This book does a great job explaining and simplifying common concepts, especially leading and lagging indicators. Even though its focus is trading and finance, it conveys much of the intuition you need to pair with data.

- Microsoft Excel tutorial on creating a forecasting model from historical data (https://support.microsoft.com/en-au/office/create-a-forecast-in-excel-for-windows-22c500da-6da7-45e5-bfdc-60a7062329fd). This is a very quick way to get your hands dirty and manipulate some data yourself.

Tips for forecasting

Don’t be afraid to use your business experience

Most sources of information are fair game when forecasting, as long as you do your best to disclose your assumptions and keep refining the quality and relevance of your inputs.

Consider the purpose of your forecast and whether a qualitative method can or should be used

Market studies, expert opinion (for example, the Delphi method), input from executives, and input from the sales and marketing team can sometimes be valuable, especially when uncertainty is high and little data is available. Here you’re taking opinions and intuitive or educated guesses into account. This might look like a rough estimate of how many sales you expect per month, or of what the growth curve of new product users looks like.
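In a Delphi process, a panel of experts answers anonymously over several rounds; after each round, a facilitator shares a summary of the answers (often the median and interquartile range), and the experts may revise their estimates. Here is a minimal Python sketch of that summarizing step, with hypothetical estimates of monthly sales from five experts over two rounds:

```python
import statistics


def summarize(estimates):
    """Median and interquartile range (IQR) of one round of expert estimates."""
    q1, _, q3 = statistics.quantiles(estimates, n=4)
    return statistics.median(estimates), q3 - q1


# Hypothetical monthly sales estimates from five experts
round_1 = [80, 120, 150, 200, 400]
round_2 = [110, 130, 150, 170, 220]  # after seeing round 1's summary

for label, estimates in [("round 1", round_1), ("round 2", round_2)]:
    median, iqr = summarize(estimates)
    print(f"{label}: median={median}, IQR={iqr}")
```

A narrowing interquartile range from one round to the next is one signal that the panel is converging; it doesn’t prove the consensus is right.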

Try to understand the past

You can’t use past performance to predict the future with a high degree of certainty, but a saying often attributed to Mark Twain captures why it’s worth looking at past events to extrapolate patterns: “History may not repeat itself. But it rhymes.” After all, historical events are often your only source of data.
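One of the simplest ways to let the past “rhyme” into a forecast is a seasonal naive forecast: assume each month next year looks like the same month this year, here scaled by this year’s overall growth. This is a minimal Python sketch with hypothetical monthly figures; carrying the growth factor over unchanged is an assumption, not a rule.

```python
# Hypothetical monthly website visits (thousands), January to December
last_year = [100, 95, 110, 120, 130, 150, 170, 165, 140, 125, 115, 180]
this_year = [110, 104, 121, 132, 143, 165, 187, 181, 154, 137, 126, 198]

# Year-over-year growth, assumed to carry over unchanged into next year
growth = sum(this_year) / sum(last_year)

# Seasonal naive forecast: same month this year, scaled by the growth factor
next_year = [round(month * growth) for month in this_year]

print(f"growth factor: {growth:.3f}")
print(next_year)
```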

Iterate on your model

In forecasting, iterations are how the learning process shows up in your model. As you gain more information, or find out what works and what doesn’t, take the opportunity to review your model.
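A lightweight way to make each iteration measurable is to backtest: pretend you’re standing at an earlier point in the data, forecast the next value, compare it with what actually happened, and repeat. This Python sketch (a rolling-origin, one-step-ahead backtest) compares two deliberately simple models on a hypothetical sales series using the mean absolute error (MAE).

```python
# Hypothetical monthly sales
sales = [120, 135, 128, 150, 158, 149, 170, 176, 168, 185]


def naive(history):
    """Forecast next month as a repeat of the last observed month."""
    return history[-1]


def moving_average(history, window=3):
    """Forecast next month as the average of the last `window` months."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def backtest(model, series, start):
    """Rolling-origin, one-step-ahead mean absolute error.

    At each step t, the model only sees series[:t] and forecasts series[t].
    """
    errors = [abs(model(series[:t]) - series[t]) for t in range(start, len(series))]
    return sum(errors) / len(errors)


for name, model in [("naive", naive), ("3-month average", moving_average)]:
    print(f"{name}: MAE = {backtest(model, sales, start=3):.2f}")
```

On this made-up, steadily rising series the naive model comes out slightly ahead, since a moving average lags behind a trend. A result like that is a cue for the next iteration, such as trying a model that accounts for the trend.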

Using AI to forecast

You can use algorithms and AI techniques to forecast. From deep learning models to evolutionary algorithms and Bayesian networks, there are many techniques at your disposal. Still, depending on the technique you use, you might have to overcome several challenges and make certain tradeoffs. One common tradeoff, especially with deep learning models, is explainability: it can be hard to tell why the model produced a particular forecast.

Access to large amounts of data has been another common problem. Recently, however, more and more techniques have emerged to adapt and retrain general-purpose models.

I hope this article helps make forecasting look slightly more approachable. It’s no secret I love forecasting and trying to understand and quantify risk and uncertainty. Having a good relationship with uncertainty and risk is a great tool for any professional and every business. Being able to make assumptions and reason with little information is the cornerstone of learning and evolving.

I’ve had colleagues say things like “But at the end of the day, these are just guesses, right?” or “This is all just made-up numbers.” If we try to demystify the topic, the simple answer is: yes. But that answer misses a lot of nuance. Forecasting is a commitment to a (more or less) systematic approach. What we want is not just a product, but a process that supports planning and helps align goals. Forecasting opens the door to discussion and revision.

For me, modeling, forecasting, and estimating are a very positive learning process. But I understand the negative associations: the fear of making mistakes, of being held accountable for a past recommendation. And while learning can be painful at times, learning together is an amazing experience.